Tales of the Scoreboard

Success Shows On The Scoreboard

I don’t know about you, but I love various sports to varying degrees. My favorites are US college football, US professional football, and many of the Olympic sports, especially the Winter Games. Who knew that sliding granite stones on ice while yelling at someone sweeping with a broom could be so captivating?!

I also remember growing up watching the Wide World of Sports on TV. Such a great opening: “the thrill of victory and the agony of defeat!” While this may be a more US-based TV experience, the image of that ski jumper crashing spectacularly is known the world over. I must admit, some days in the world of Customer Success we land gracefully, and other days it’s a spectacular ski crash like the one in that opening sequence…

One thing all sports have in common is that there must be some sort of measurement or score. Those scores may vary by the day, the conditions, or the circumstances; however, over time they generally show who the top performers are and who still must refine their skills. In that sense, selecting a Customer Success Platform (CSP) isn’t too far from an athletic event. The game is the same, but the players and their relative skills and approaches vary. No matter the competition, scores are vital to determine who is the best on the field.

In our pursuit of a CSP, we knew the values and deliverables we needed: a flexible, affordable, reliable, manageable, and scalable (FARMS) solution. See here for some of my previous articles on the qualities that we were seeking.

“Just like in all sports, not all parts of the game are necessarily mastered even by champions. But, those who master the parts that matter the most without ignoring their weaknesses are most likely to win.”

The Rules of the Game

As a quick refresher, our company's key value areas are:

Program Governance

Expansion of Services

Measurement of Success and Satisfaction

Financial / Total Cost of Ownership

Operation/Ease-of-Use (read more here).

Of course, these break out into a lot of different features/benefits within CSP solutions. So, we had to create a scoring system.

Just like in all sports, not all parts of the game are necessarily mastered even by champions. But, those who master the parts that matter the most without ignoring their weaknesses are most likely to win.

My daughter was a competitive diver in middle and high school. It was there that I learned about “degrees of difficulty.” Basically, these are multipliers that rapidly raise your score and entice you to push for those harder-to-perform maneuvers. For example, a backward flip with a rotation has a higher degree of difficulty than a simple forward pike dive. Thus a mediocre backflip with rotation could score more points than a well-executed pike. However, if you mess up badly enough, that multiplier against a zero or fractional point may not land you on the podium.

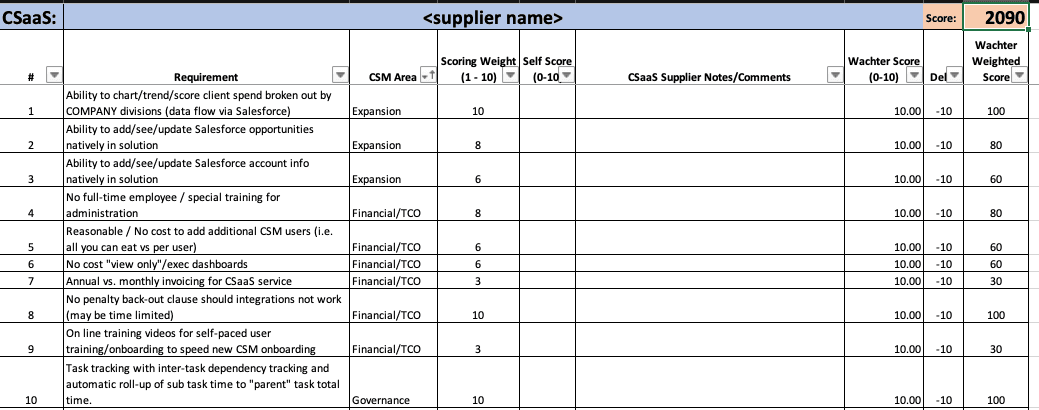

Knowing that not every item in our list of “must haves” and “nice to haves” was equally important, our team came up with a weighting factor. Each specific requirement could have a score of 0 to 10 and a multiplier of 1 to 10. So a very important item could earn a CSP 100 points (10 x 10), whereas a “nice to have” item might only have a maximum of 30 (10 x 3). Enough misses on high-value items could quickly knock a CSP out of contention, but three or four medium scores might make up for one major item. Not everyone is Ilia Malinin (the “quad god” US figure skater of the most recent Winter Olympics), but even he missed medal contention in several competitions by committing too many errors.
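The arithmetic above can be sketched in a few lines of Python. This is only an illustration of the score-times-multiplier idea, not the spreadsheet we used; the requirement names, weights, and vendor scores below are made up.

```python
from dataclasses import dataclass

MAX_RAW_SCORE = 10  # each requirement is scored 0 to 10


@dataclass(frozen=True)
class Requirement:
    name: str    # hypothetical requirement label
    weight: int  # importance multiplier, 1 to 10


def weighted_points(raw_score: int, weight: int) -> int:
    """Points earned on one requirement: raw score times its multiplier."""
    if not 0 <= raw_score <= MAX_RAW_SCORE:
        raise ValueError("raw score must be between 0 and 10")
    if not 1 <= weight <= 10:
        raise ValueError("weight must be between 1 and 10")
    return raw_score * weight


def total_points(requirements, raw_scores):
    """Sum of weighted points across all requirements for one vendor."""
    return sum(weighted_points(raw_scores[r.name], r.weight) for r in requirements)


def max_possible(requirements):
    """Best achievable total: a raw 10 on every requirement."""
    return sum(MAX_RAW_SCORE * r.weight for r in requirements)


# Hypothetical requirements and scores, for illustration only.
requirements = [
    Requirement("must-have integration", 10),
    Requirement("nice-to-have theming", 3),
]
vendor_scores = {"must-have integration": 6, "nice-to-have theming": 10}

print(total_points(requirements, vendor_scores))  # 6*10 + 10*3 = 90
print(max_possible(requirements))                 # 10*10 + 10*3 = 130
```

Note how a perfect score on the “nice to have” (30 points) cannot make up for a middling score on the “must have” (60 of a possible 100). That is the degree-of-difficulty effect at work.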

Tales of the Tape

So, what are the elements that our CSP “athletes” would be measured on? How many “data double axels” and “tech toe loops” must be performed to get that gold medal?

Just like the International Olympic Committee, we published the “rules.” We gave each respondent our scorecard system. Everyone knew what they were going to be measured on and how it was weighted. It looked a little like this:

The Process

We had 34 different measurement areas that each CSP had to respond to.

Each vendor had to supply a “self score” giving their understanding of how well they met each requirement. In our case, the maximum possible score was 2090 points. Within a set timeframe, each vendor (we moved forward to this phase with five) had to supply this data back to us along with their pricing for consideration, realizing that they were competing with others going for that same 2090 goal.

In parallel, we requested either demo site access or full sandboxes to evaluate and score for ourselves. That access (live or in demo) allowed us to start scoring for “execution points.”

It also allowed us to see whether our perception and the vendor’s perception matched or were far apart. Think of this as a “smoke and mirrors” filter, if you will.
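One way to picture that filter is a side-by-side comparison of the vendor’s self scores and your own evaluation scores, flagging the requirements where the two disagree the most. The sketch below is hypothetical: the requirement names, the scores, and the two-point threshold are assumptions for illustration, not values from our process.

```python
def perception_gaps(self_scores, our_scores, threshold=2):
    """Flag requirements where vendor and evaluator scores differ widely.

    Returns (requirement, gap) pairs, where gap = vendor self score minus
    our score, keeping only gaps of at least `threshold` points in either
    direction, sorted with the largest disagreement first.
    """
    flagged = []
    for name, vendor_score in self_scores.items():
        if name not in our_scores:
            continue  # nothing to compare against yet
        gap = vendor_score - our_scores[name]
        if abs(gap) >= threshold:
            flagged.append((name, gap))
    return sorted(flagged, key=lambda item: abs(item[1]), reverse=True)


# Hypothetical raw scores (0 to 10) for a single vendor.
self_scores = {"reporting": 9, "integrations": 8, "ease of use": 7}
our_scores = {"reporting": 5, "integrations": 8, "ease of use": 9}

for name, gap in perception_gaps(self_scores, our_scores):
    print(f"{name}: vendor minus ours = {gap:+d}")
```

A large positive gap suggests the vendor is overselling, or that the requirement was read differently than intended. A negative gap means the product did better in your hands than the vendor claimed.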

We've created a version of a vendor analysis to share. Make a copy here.

And the winner is….

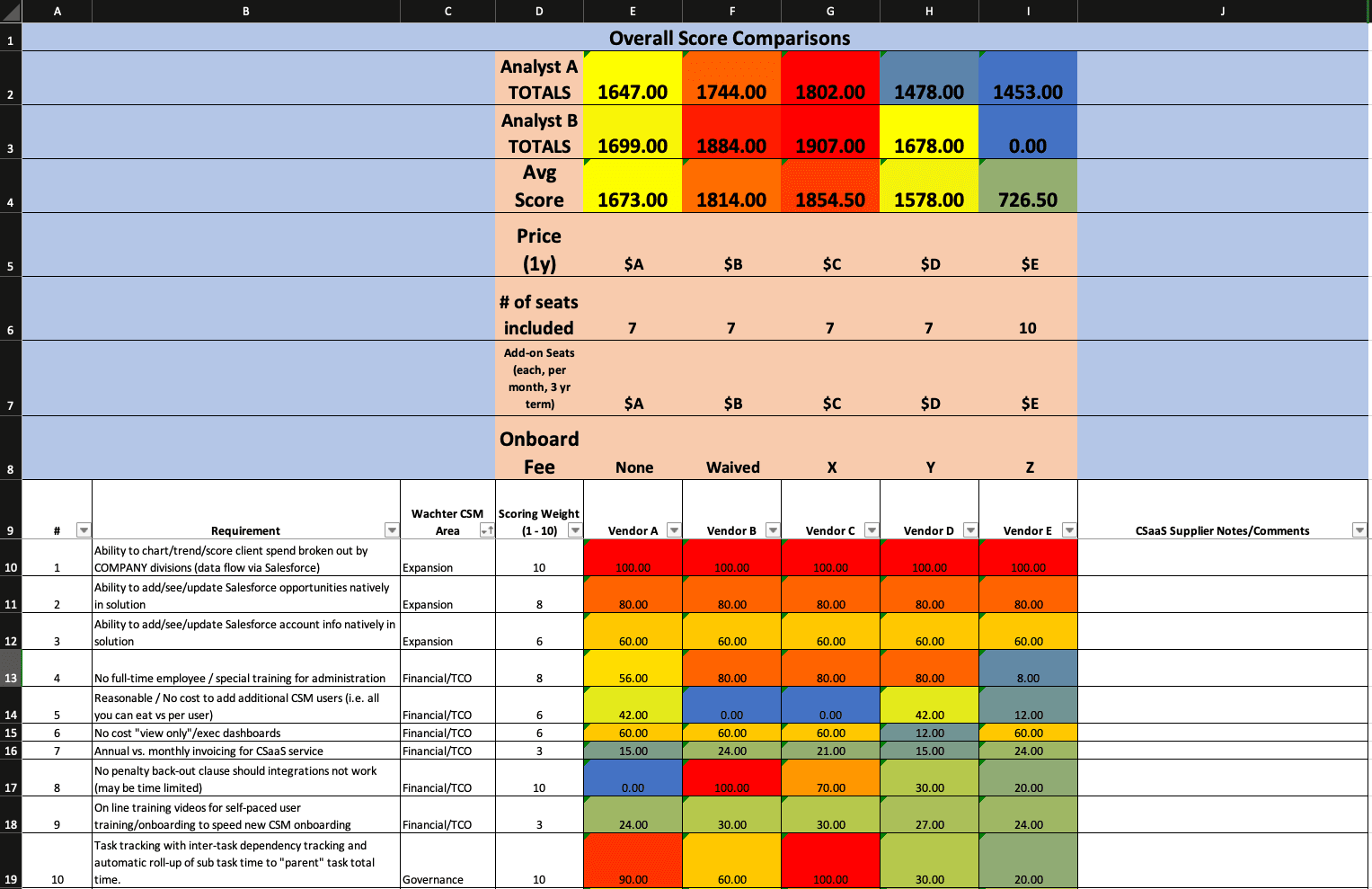

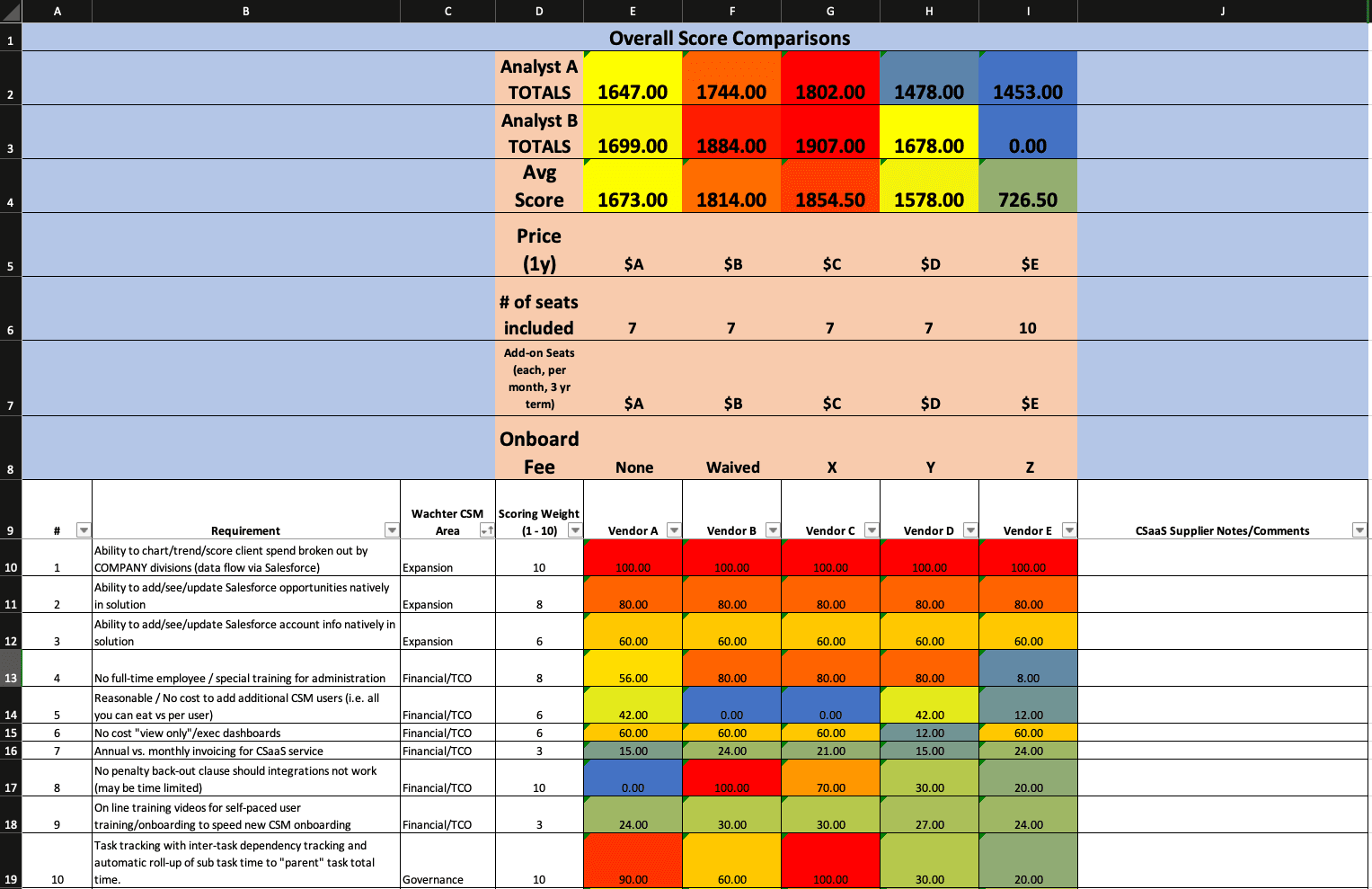

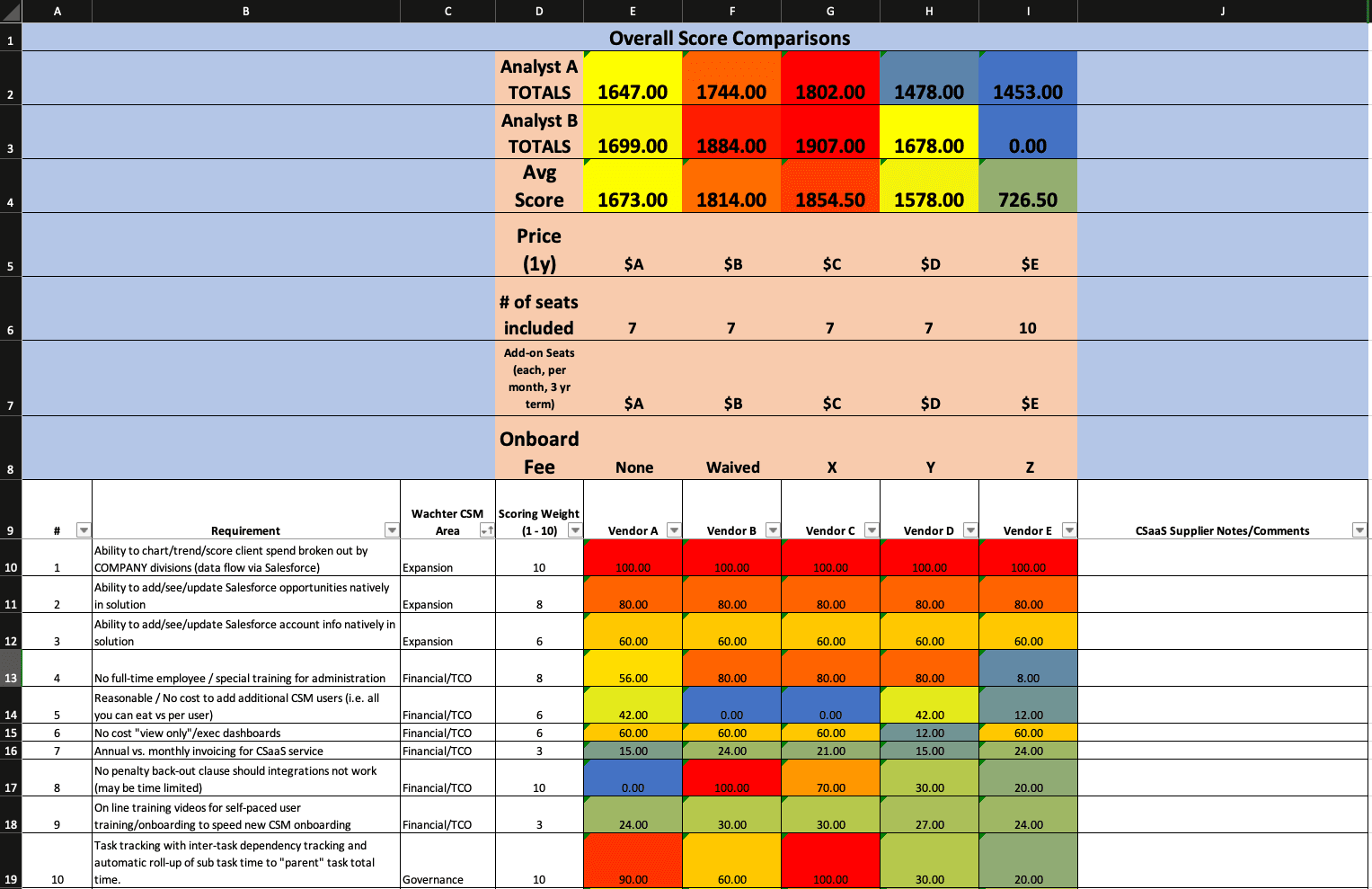

Unlike dive meets, we didn’t have special scoring software, but we did have the tech that runs the business world: spreadsheets with formulas and colors! So, after some creative application of filters, formulas, and fill colors, we ended up with this (truncated for brevity):

Reds indicated “hot” scores. In this case, red was good. Blues were “cold” and were missing the mark. In the end, we had two contenders, but one was the clear winner once we factored in initial cost and total cost of ownership (TCO). I will leave it to your imagination whom we chose.

One important note: We were well aware that even a “perfect” score that cost beyond our budget would limit a vendor’s viability; however, if they were the perfect fit, we could take the TCO to our leadership to get buy-in and additional budget.

“We don’t manage our clients without data (or at least we shouldn’t - hence CSPs even existing). Why would you make such a big decision without it?”

Your sport, your rules…

These tactics were the way that we chose to set up our “game.” Your methods and rules may differ - and that’s ok. None of us are the sole holders of Customer Success “truth.” Just know that, as a CS practice, you need more than your gut instinct to make this decision. We don’t manage our clients without data (or at least we shouldn’t - hence CSPs even existing). Why would you make such a big decision without it? Go make up your version of the CSP Olympics. There are lots of sports that use a round ball but are still unique in their execution. Just open up that part of you that made up games with crazy rules on the playground as a child. Throw them all out there, then chisel them down into a workable format. And, at the end, you’ll know clearly who belongs on the podium with the gold and who needs to go back to practice.

Points to Ponder

If you were designing a scoring system, would it be different? How would you delineate the levels of importance to your organization?

Who would you invite to such a competition? How many vendors would you open the process up to?

What do you do if you have an incumbent vendor?

If your score and the vendor’s self score do not match, is it marketing fluff or is it a mismatch on the requirement?

Do you know what your TCO max is?

What if there is a tie?

Who are your “judges”?

How will you set the scoring system (consensus, leader specified, outside consultant)?

What/how much will you share with the respondents that do not win?

Have you weeded out things that are merely subjective, and figured out the root importance that underlies them so that they can be measured?

Mike Hurd

Director of Customer Success

Wachter

Mike is a seasoned technology and customer success leader with over 20 years of experience driving customer retention and large-scale infrastructure solutions. As Director of Customer Success and Opportunity Engagement at Wachter, Inc., he oversees a team dedicated to enhancing satisfaction and delivering strategic customer success initiatives. Over his 17-year tenure with Wachter, Mike previously served as Director of Technology Services, leading enterprise technology architecture and consulting engagements for Fortune 500 clients. Earlier in his career, he held senior leadership roles including CTO at Emporos and Director of Operations at Harbor Technologies. Mike is recognized for aligning technical expertise with customer-focused business outcomes.